Diffusion Models Already Have a Semantic Latent Space

Kwon, Mingi, Jaeseok Jeong, and Youngjung Uh. “Diffusion Models Already Have A Semantic Latent Space.” In The Eleventh International Conference on Learning Representations.

What story does this work tell?

- [Motivation] Diffusion models lack a semantic latent space, which is essential for controlling the generative process.

- [Insight] The Denoising Diffusion Implicit Model (DDIM) reconstructs original images nearly perfectly. This makes it suitable for image editing, i.e., rendering target attributes on a real image.

- [Motivation] Simply editing the latent variables (i.e., the intermediate noisy images) leads to distorted images or incorrect manipulations.

- [Motivation] Shifting the noise predicted by the noise predictor at each sampling step fails to manipulate the generated image.

- [Motivation] More complicated procedures are therefore required: providing guidance in the reverse process, or finetuning models for each attribute.

- [Previous Guidance Methods]

- Image Guidance: Mix the latent variables of the guiding image with unconditional latent variables.

- [Limitation] Ambiguous to specify which attribute to reflect.

- [Limitation] Lacks intuitive control for the magnitude of change.

- Classifier Guidance: Manipulate images by imposing gradients of a classifier on the latent variables in the reverse process to match the target class.

- [Limitation] Requires training an extra classifier.

- [Limitation] Computing gradients through the classifier during sampling is costly.

- Finetuning the whole model (DiffusionCLIP): Requires multiple models to reflect multiple descriptions.


- [Insight] Generative Adversarial Networks (GANs) inherently support straightforward image editing in their latent space.

- [Limitation] For a real image, finding its exact latent vector (GAN inversion) is often challenging and produces unexpected appearance changes.

- [Motivation] Diffusion models already have a nearly perfect inversion property; if they also had such a semantic latent space, they would enable excellent image editing.

- [Previous Method] Diffusion Autoencoder: introduces a latent embedding of the original image as an additional input to the reverse process.

- [Limitation] Requires training from scratch and is not compatible with pretrained diffusion models.

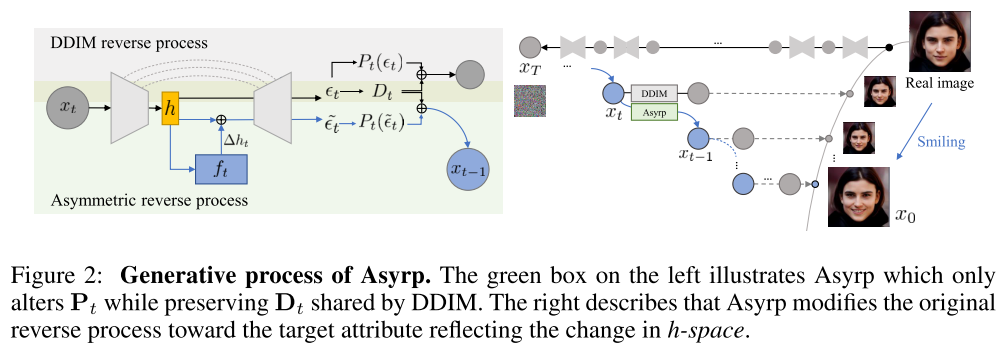

- [Contribution] Asymmetric reverse process (Asyrp), a novel controllable reverse (i.e. backward denoising) process that

- discovers the semantic latent space (“h-space”) of a frozen diffusion model

- enables attribute editing of the original image through modification in the latent space

- [Contribution] h-space, a semantic latent space with the following properties that accommodate semantic image manipulation:

- homogeneity: The same shift in h-space results in the same attribute change in all images.

- linearity: Linear changes in h-space lead to linear changes in attributes.

- compositionality: Adding multiple changes manipulates the corresponding attributes simultaneously.

- robustness: The changes do not degrade the quality of the resulting images.

- consistency across timesteps: For a desired attribute change, the shifts applied at different timesteps are almost identical to each other.

- [Contribution] A principled design of the generative process that enables versatile editing and quality boosting, guided by two quantifiable measures:

- editing strength of an interval

- quality deficiency at a timestep

- [Conclusion] Asyrp is generally applicable to various architectures (DDPM+, iDDPM, ADM) and datasets (CelebA-HQ, AFHQ-dog, LSUN-church, LSUN-bedroom, MetFaces).

- [Conclusion] The discovered h-space effectively accommodates semantic image manipulation.

- [Conclusion] The principled generative process achieves versatile editing and high quality by measuring the editing strength of an interval and the quality deficiency at a timestep.
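Homogeneity, linearity and compositionality all refer to one simple operation: adding scaled direction vectors to a point in h-space, which the paper takes to be the deepest (bottleneck) feature map of the U-Net noise predictor. The sketch below only illustrates that arithmetic under stated assumptions. The function `edit_h`, the three-element toy vector standing in for $h_t$, and the "smile"/"age" directions are hypothetical, and the properties themselves are empirical findings of the paper that this code does not demonstrate.

```python
from typing import List, Sequence


def edit_h(h: Sequence[float],
           directions: Sequence[Sequence[float]],
           scales: Sequence[float]) -> List[float]:
    """Return h + sum_i scales[i] * directions[i], element-wise.

    h:          flattened bottleneck feature h_t (toy stand-in, hypothetical)
    directions: one editing direction (a Delta h) per attribute (hypothetical)
    scales:     one editing strength per direction
    """
    if len(directions) != len(scales):
        raise ValueError("need exactly one scale per direction")
    edited = [float(v) for v in h]
    for direction, scale in zip(directions, scales):
        if len(direction) != len(edited):
            raise ValueError("each direction must have the same length as h")
        for k, value in enumerate(direction):
            edited[k] += scale * value
    return edited


h = [0.5, -1.0, 2.0]       # toy stand-in for h_t of one image
smile = [1.0, 0.0, 0.0]    # hypothetical Delta h for "smiling"
age = [0.0, 0.5, 0.0]      # hypothetical Delta h for "old"

print(edit_h(h, [smile], [1.0]))            # [1.5, -1.0, 2.0]: one attribute, unit strength
print(edit_h(h, [smile], [2.0]))            # [2.5, -1.0, 2.0]: doubled strength, doubled shift
print(edit_h(h, [smile, age], [1.0, 2.0]))  # [1.5, 0.0, 2.0]: two shifts simply add up
```

Under homogeneity and consistency across timesteps, as the paper reports them, the same `smile` vector would be reused for every image and, approximately, for every timestep of the editing interval.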

Methodology

Problem Definition

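- Goal: Allow semantic latent manipulation of images $x_0$ generated from $p_\theta$, a pretrained and frozen diffusion model.

- [Insight] Let $\epsilon_t^{\theta}$ be the predicted noise at timestep $t$ in the reverse process, and let $\tilde{\epsilon}_t^{\theta}$ be its shifted counterpart. Then the shifted terms of $\tilde{\epsilon}_t^{\theta}$ in the predicted $x_0$ and in the direction pointing to $x_t$ destruct each other in the reverse process, so the resulting change in $x_{t-1}$ is negligible.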
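Why the two shifted terms destruct each other can be checked numerically on a single scalar pixel. This is a minimal sketch, assuming the deterministic DDIM update ($\eta = 0$) with $\alpha_t$ denoting the cumulative product of the noise schedule. The helper `ddim_step`, the constant shift `delta`, and the schedule values 0.50 and 0.52 for two adjacent timesteps are hypothetical choices; in Asyrp the shift would come from editing $h_t$, not from adding a constant to the predicted noise.

```python
import math


def ddim_step(x_t: float, eps_p: float, eps_d: float, a_t: float, a_prev: float) -> float:
    """One deterministic DDIM step (eta = 0) on a scalar.

    eps_p:  noise fed to the predicted-x0 term P_t
    eps_d:  noise fed to the direction term D_t (direction pointing to x_t)
    a_t, a_prev: cumulative alphas at timesteps t and t-1
    """
    p_t = (x_t - math.sqrt(1.0 - a_t) * eps_p) / math.sqrt(a_t)  # predicted x0
    d_t = math.sqrt(1.0 - a_prev) * eps_d                          # direction pointing to x_t
    return math.sqrt(a_prev) * p_t + d_t


x_t, eps, delta = 0.3, 0.8, 1.0  # toy values; delta is a hypothetical noise shift
a_t, a_prev = 0.50, 0.52         # hypothetical cumulative alphas of adjacent steps

base = ddim_step(x_t, eps, eps, a_t, a_prev)
symmetric = ddim_step(x_t, eps + delta, eps + delta, a_t, a_prev)  # shift in both P_t and D_t
asymmetric = ddim_step(x_t, eps + delta, eps, a_t, a_prev)         # shift in P_t only

print(f"symmetric change:  {symmetric - base:+.3f}")   # -0.028
print(f"asymmetric change: {asymmetric - base:+.3f}")  # -0.721
```

The shift enters $P_t$ with coefficient $-\sqrt{\alpha_{t-1}(1-\alpha_t)/\alpha_t}$ and $D_t$ with coefficient $+\sqrt{1-\alpha_{t-1}}$; since $\alpha_{t-1} \approx \alpha_t$ for adjacent steps, the two nearly cancel. Keeping the original noise in the direction term breaks this symmetry, which is the idea behind an asymmetric reverse process.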

Asymmetric Reverse Process (Asyrp)

- Formalization of Asyrp:
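A sketch of the formalization in DDIM notation, as we read it from the paper (deterministic case, $\eta = 0$; $\alpha_t$ is the cumulative product of the noise schedule, $h_t$ the bottleneck feature of the noise predictor and $\Delta h_t$ its shift in h-space). Asyrp feeds the shifted noise $\tilde{\epsilon}_t^{\theta}$ only into the predicted-$x_0$ term $P_t$ and keeps the original $\epsilon_t^{\theta}$ in the direction term $D_t$.

```latex
\begin{aligned}
P_t(\epsilon) &= \frac{x_t - \sqrt{1-\alpha_t}\,\epsilon}{\sqrt{\alpha_t}},
\qquad
D_t(\epsilon) = \sqrt{1-\alpha_{t-1}}\,\epsilon,\\
x_{t-1} &= \sqrt{\alpha_{t-1}}\,P_t\big(\epsilon_t^{\theta}(x_t)\big) + D_t\big(\epsilon_t^{\theta}(x_t)\big)
  && \text{(DDIM)},\\
\tilde{x}_{t-1} &= \sqrt{\alpha_{t-1}}\,P_t\big(\tilde{\epsilon}_t^{\theta}(x_t)\big) + D_t\big(\epsilon_t^{\theta}(x_t)\big)
  && \text{(Asyrp)},\\
\tilde{\epsilon}_t^{\theta}(x_t) &= \epsilon_t^{\theta}(x_t \mid \Delta h_t).
\end{aligned}
```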

- Title: Diffusion Models Already Have a Semantic Latent Space

- Author: Der Steppenwolf

- Created at : 2025-06-06 12:55:36

- Updated at : 2025-06-22 20:46:50

- Link: https://st143575.github.io/steppenwolf.github.io/2025/06/06/Diffusion-Models-Already-Have-a-Semantic-Latent-Space/

- License: This work is licensed under CC BY-NC-SA 4.0.
